Leading-edge semis are seeing an unprecedented boom in demand. The resulting supply tightness is leading to annual price hikes and peak margins for TSMC despite rising input costs. However, as even the mighty TSMC will not be able to meet demand, leading chip designers are now looking at second-tier foundries such as Samsung, Intel, and even Japanese startup Rapidus. This is Deutsche on the current environment in the run-up to the TSMC call:

“We are minded to believe reports that TSMC has completely sold out of N2 and A16 capacity for 2028 due to over-whelming demand. The best tell is that we believe even Rapidus is attracting interest from tier-1 clients for leading-edge foundry, not just Samsung and Intel. This means that decisions made some time ago now will have a significant impact on market shares for leading fabless companies and that allocation (and yield) is now the key criterion of success. Those facing unanticipated demand may then be forced to consider alternatives, whether they like it or not. In such an environment, TSMC is likely to face difficulty serving incremental demand in the short-run, leaving pricing as the key methodology for rationing supply, for example via hot runs. It is no wonder that there are reports that sub-5nm nodes will see four consecutive years of price hikes of 5-10% pa, starting in 2026. This brings into view the possibility of TSMC sustaining a mid-60s gross margin (vs cons: 62-63% and 60% last year) despite Arizona dilution and cost pressures (power, GAC). And yet, forcing customers elsewhere even if just for advanced packaging (eg EMIB) is unsettling and brings risk. As a result, we continue to expect TSMC’s traditional 95% leading-edge share to head down to 90% or potentially even lower, but regard that as normal in a very tight environment.”
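To put the reported price hikes in the quote above in perspective, here is a minimal sketch of how four consecutive annual increases of 5% to 10% would compound. It assumes the hikes apply to the same base price each year, which is our simplification and not something the Deutsche note states; the function name is purely illustrative.

```python
def cumulative_increase(annual_rate: float, years: int = 4) -> float:
    """Cumulative price increase after compounding `annual_rate` for `years` years."""
    return (1 + annual_rate) ** years - 1


# Low and high ends of the reported 5-10% per-annum range, over four years
low = cumulative_increase(0.05)
high = cumulative_increase(0.10)

print(f"Cumulative price increase: {low:.1%} to {high:.1%}")
```

Under these assumptions, sub-5nm wafer prices would end the four-year period roughly 22% to 46% above their starting level.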

We agree with Deutsche that leaving room for competitors to grab market share introduces some risk, as it will give them more cash and know-how to compete going forward. TSMC is aware of this and is now stepping up N3 capacity around the globe, with new fabs coming online from 2027. This is CC Wei on the TSMC call:

“Historically, we do not add additional capacity to a node once it has reached its target capacity. However, as a foundry, our first responsibility is to provide our customers with the necessary capacity to unleash their innovations. Based on our assessment, to meet the strong demand in AI application, we are stepping up our CapEx investment to increase our N3 capacity. Thus, we are now executing a global capacity plan to support the robust multiyear pipeline of demand for 3-nanometer technologies, which are used by smartphone, HPC AI, including HBM-based dies, automotive and IoT customers.

In Taiwan, we are adding a new 3-nanometer fab to our GIGAFAB cluster in Tainan Science Park. Volume production is scheduled for the first half of 2027. In Arizona, our second fab will also utilize 3-nanometer technologies. Construction is already complete and volume production will begin in the second half of 2027. In Japan, we now plan to utilize 3-nanometer technology in our second fab and volume production is scheduled in 2028.”

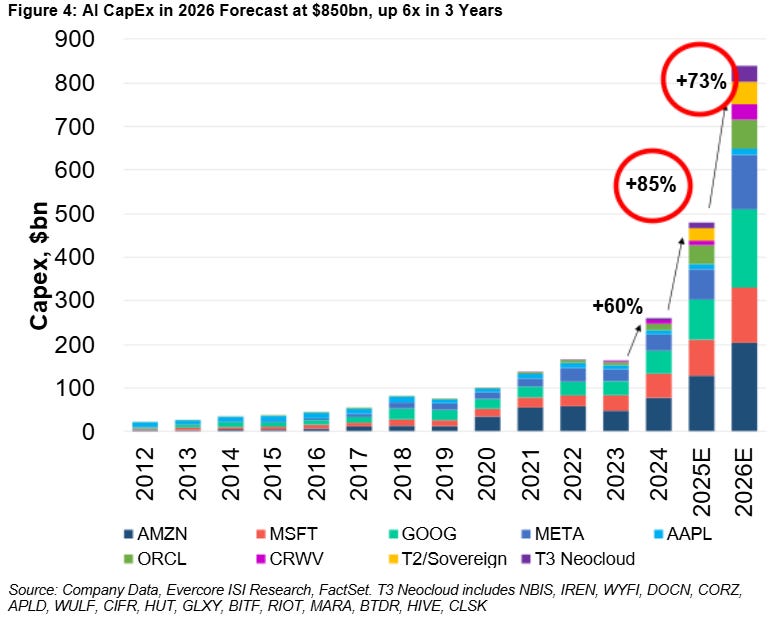

While many were already screaming bubble in 2023, consensus forecasts for AI demand have been consistently too low over the last three years. We also know that current installed capacity is not able to meet token demand, despite the boom in AI capex over the last two years.

Our central thesis is that we’re still in the very early stages of what AI can do, similar to 1998 when it comes to the rise of the internet, i.e. three years after the Netscape IPO. In subsequent decades, we saw massive new markets and companies being formed on top of this global network, from search to e-commerce, social media, the cloud, SaaS, the sharing economy, video streaming, etc.

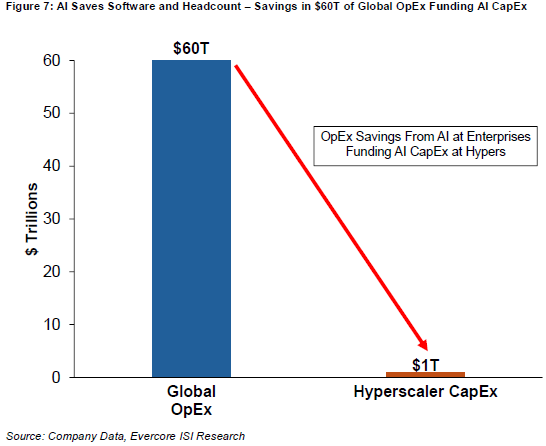

One way to gauge long-term AI demand is to estimate how much of global corporate expenses will be reoriented towards token consumption. For example, if we’re heading towards 10% of global opex being redirected towards AI workloads, this would mean $6 trillion in annual AI opex. This spend can take three forms: purchasing tokens over API (both from closed-source models such as Claude and Gemini and from open-source models such as Qwen and Kimi), purchasing tokens within existing SaaS systems, or purchasing GPUs to host your own customized models.

If long term AI demand goes towards a ratio of 10% of global opex, we probably need about $15 trillion in AI capacity. Assuming this equipment has a lifetime of six years, this translates into around $2.5 trillion in required annual AI capex.

Personally, we think that long-term AI demand could easily be higher. For example, 10% of global GDP being accelerated by AI workloads would mean $13 trillion in annual AI demand. This would require $32.5 trillion of AI capacity, or roughly $5.4 trillion of annual AI capex.
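The sizing logic of the two scenarios can be sketched in a few lines. The 2.5x ratio of installed capacity to annual AI spend is not stated explicitly in the text; we infer it from the figures given ($15 trillion of capacity for $6 trillion of spend, and $32.5 trillion for $13 trillion). The function and parameter names are our own, and the six-year lifetime is the assumption stated above.

```python
def ai_capex_sizing(annual_ai_spend_tn: float,
                    capacity_per_spend: float = 2.5,
                    lifetime_years: float = 6.0) -> tuple[float, float]:
    """Return (installed capacity, annual capex), both in $ trillions.

    capacity_per_spend: dollars of installed AI capacity needed per dollar of
        annual AI spend (2.5x is inferred from the article's figures).
    lifetime_years: assumed useful life of the equipment.
    """
    capacity = annual_ai_spend_tn * capacity_per_spend
    annual_capex = capacity / lifetime_years
    return capacity, annual_capex


# Scenario 1: 10% of global opex ($6tn); scenario 2: 10% of global GDP ($13tn)
opex_case = ai_capex_sizing(6.0)
gdp_case = ai_capex_sizing(13.0)

for label, (capacity, capex) in (("10% of opex", opex_case), ("10% of GDP", gdp_case)):
    print(f"{label}: ${capacity:.1f}tn capacity, ${capex:.2f}tn annual capex")
```

Both inputs are easy to stress-test: a shorter equipment lifetime raises the required annual capex proportionally, while a lower capacity-to-spend ratio lowers it.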

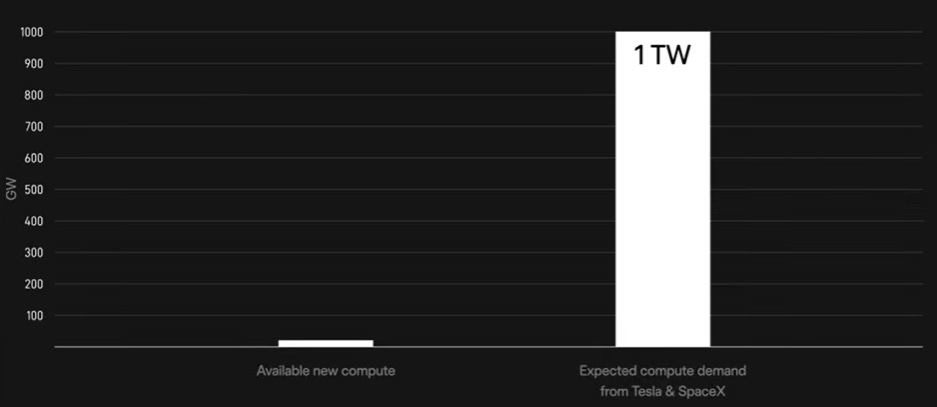

This is why Jensen mentioned on a recent podcast that energy will be the biggest bottleneck to scaling up AI capacity. At $30 billion of investment per GW, $15 to $30 trillion of AI capacity would require 500 GW to 1 TW of power. The entire installed power capacity of the United States today is roughly 1.2 TW, and the world combined sits at roughly 8.5 TW. So, we would need the equivalent of roughly 0.4x to 0.8x of the US power grid dedicated to AI compute.
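The power math above can be reproduced with a short sketch. All inputs are the figures quoted in the text ($30 billion per GW, roughly 1.2 TW of US capacity, roughly 8.5 TW worldwide); the constant and function names are our own, and the script also expresses the result as a share of the US and world figures.

```python
COST_PER_GW_BN = 30.0    # $bn of AI investment per GW, as quoted in the text
US_GRID_GW = 1200.0      # approx. US installed power capacity (1.2 TW)
WORLD_GRID_GW = 8500.0   # approx. world installed power capacity (8.5 TW)


def power_needed_gw(capacity_tn: float, cost_per_gw_bn: float = COST_PER_GW_BN) -> float:
    """GW of power implied by `capacity_tn` ($ trillions) of AI capacity."""
    return capacity_tn * 1000.0 / cost_per_gw_bn  # convert $tn to $bn, then divide


for capacity_tn in (15.0, 30.0):
    gw = power_needed_gw(capacity_tn)
    print(f"${capacity_tn:.0f}tn of AI capacity -> {gw:,.0f} GW "
          f"({gw / US_GRID_GW:.2f}x US grid, {gw / WORLD_GRID_GW:.1%} of world capacity)")
```

The result is linear in the cost-per-GW assumption, so if the investment per GW turns out to be higher than $30 billion, the implied power requirement for the same dollar amount of capacity falls accordingly.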

Elon arrived at a similar calculation—although his estimate is that Tesla and SpaceX alone would need 1TW of compute. Roughly 80% of the compute of the initial fab with Intel is destined for SpaceX—which recently acquired xAI—to build space-based AI satellite constellations. The remaining 20% will focus on edge inference ASICs for Tesla Full Self-Driving, Cybercab robotaxis, and Optimus humanoid robots.

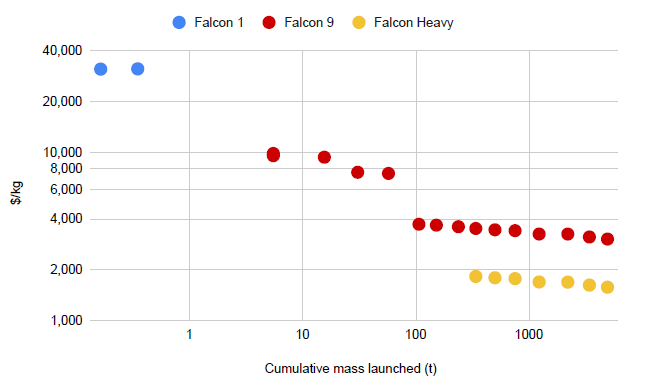

Putting AI data centers into space will be the key investment angle to IPO SpaceX at an astronomical valuation. SpaceX does have a strong position here, as the company is transforming into a vertically integrated AI company. SpaceX will manufacture the GPUs, CPUs and memory in its jointly operated fab(s) with Intel, build the satellites, and then launch this compute into space with Starship at the lowest cost in the industry. The cost to put a kilogram into space continues to fall and is estimated to drop below $100 with Starship; Elon has mentioned a target of $20 per kg.

To run Terafab, Intel will bring in the know-how of 14A manufacturing and advanced packaging such as EMIB, while SpaceX will bring in the capital raised in its upcoming IPO. It sounds like Intel will bring in the IP, and then the fab(s) will be owned and operated by SpaceX. However, Intel has substantial shell capacity available, and if SpaceX needs to scale up production down the road, it’s also possible that Intel can finally fill these fabs and then supply silicon to SpaceX and Tesla as a foundry. This is Musk on this week’s earnings call on the Terafab:

“Terafab is not some sort of mechanism to generate leverage over our chip suppliers. It’s just literally, we don’t see a path to having a sufficient quantity of AI chips down the road. As we scale production to high levels, just the rate at which the industry is growing in logic, but even more so in memory, we just anticipate hitting a wall if we don’t make chips ourselves. So that’s the reason for the Terafab.

We do have some ideas for how to make maybe radically better AI chips. And these are kind of research ideas, which means like long shot, but if long shot pays off, it’s maybe a giant improvement. And it’s just easier to do that if we have our own research fab and are developing our own production technologies. If you look long term at having AI satellites, making chips for those, no way the existing industry can keep up with that. It’s impossible.

So Intel is excited to partner with us on some of the core manufacturing technologies. We plan to use Intel’s 14A process, which is state-of-the-art, and in fact, not yet totally complete. But by the time Terafab scales up, 14A will be probably fairly mature or ready for prime time. So we think it’s going to be a great partnership.

In the near term, Tesla will be building the research fab on our Giga Texas campus. This is something we expect to be probably a $3 billion-ish initiative and capable of maybe a few thousand wafers per month, but it’s really intended to try out ideas. We have some ideas for improving the fundamental technology of how chips are made and there is some new physics we’d like to test out, but we also want to test out the ability to see if something is working in production. So you need a few thousand wafer starts a month to make sure that a production process is sound.

Then, SpaceX is going to take care of the initial phase of the scaled up Terafab. Any kind of intercompany thing has to be approved by both the SpaceX and Tesla Board of Directors. It has got to go through a conflict resolution because we’ve got to make sure Tesla shareholders are served and SpaceX shareholders are served, and then strike the right balance there. So it takes a while to work through the kind of independent director reviews on this.

So, Tesla is doing the research fab, SpaceX is doing the initial part of the large-scale Terafab, and then we got to figure out the rest.”

Besides gaining a great new partner, Intel is also finally seeing demand growth for its own silicon. Agentic AI relies on tasks such as executing Python code, web scraping and database querying, and GPUs are terrible at these types of sequential tasks. CPUs happen to excel here. This is Lip-Bu on the Intel call:

“Xeon server demand is seeing strong and sustained momentum. Customers are deploying server CPUs along accelerators in the ratio that is moving back towards CPU. The accelerator remains central to Frontier AI, but the backbone of AI computing in production remains a CPU anchored architecture. That is good news for the x86 ecosystem, and it is a structural reason I’m confident that the CPU franchise will continue to be a meaningful growth engine for the company in the years ahead.”

This should provide both Intel and AMD with a nice tailwind in the coming years. However, long term, the biggest winner from this trend will be ARM. ARM is the key CPU partner of Nvidia, and hyperscalers continue to move workloads to custom CPUs based on ARM cores. The advantages are that ARM-based CPUs offer better performance per watt and that hyperscalers can fully customize the design for their particular cloud workloads.

However, short term, Intel will see the biggest tailwind from this. As we saw above, TSMC doesn’t have enough capacity, while Intel’s fabs are running at low utilization, as can be seen from its gross margin of 37% and its revenues, which are down more than 30% since 2022. So, in the coming years, Intel should see the largest EPS uplift from agentic AI boosting server CPU demand.

Due to the shortage in power generation, any company that can generate electricity for AI data centers will continue to see strong demand growth. This is JP Morgan in their recent initiation on gas turbine supplier GE Vernova:

“The transformation since then has been dramatic as large gas turbine production capacity has emerged as a critical bottleneck in the AI-driven power and grid infrastructure buildout. GEV’s gas turbine order book is effectively sold out through 2029 (~83GW under contract at YE25, up 28GW from 55 GW at June 30) even as annual production capacity ramps to ~20GW by mid-2026, while Electrification backlog grew $11 billion year-over-year to $35 billion, and the Prolec GE acquisition adds captive transformer manufacturing in a globally supply-constrained market. This demand environment translates directly into clear visibility to margin improvement and free cash flow growth over the next 3-5 years as higher-priced backlog converts to revenue.”

We can see the same boom in demand for all other suppliers exposed to data center construction.

Overall, our view is that the market continues to strongly underestimate long-term AI demand. Nvidia and other AI data center growth stocks have been climbing a wall of worry over the past three years, with continued questioning of what will drive these share prices higher (the answer is simple: EPS growth) and whether AI investments will turn out to be a bubble. The obvious answer is no, as all GPU capacity continues to be taken up and is generating tremendous revenue growth for both AI model and cloud providers. Next, we will go through our key stock picks.